Hyperrhiz 11

Seriously Writing SIRI

Peggy Weil

Citation: Weil, Peggy. “Seriously Writing SIRI.” Hyperrhiz: New Media Cultures, no. 11, 2015. doi:10.20415/hyp/011.e05

Abstract: This essay discusses the evolution of chatterbots and presents as a case study the process of designing a chatbot, MrMind (1998-2014). The author argues that while writing bot is a uniquely modern, even futuristic, endeavor, the bot author draws on long-standing artistic, theatrical and improvisational traditions.

1.

For, in the first place, I have left half a dozen places purposely open for them; and in the next place, I pay them all court.

- Sterne, The Life and Opinions of Tristram Shandy

Bots, the first native netizens, are by their very nature creatures of the emergent and variable texts characteristic of netprov. An author composing a conversational agent confronts not only the empty page but also the empty every-other-line: a one-sided conversation, written without the benefit of prearranged cooperation from the other side. A bot script harbors a latent dialogue, one whose realization depends on the responses it invokes from an interlocutor. Although the conversations and the resulting transcripts are dialogues, and are experienced as such, the author writes only one half of each, a half marked by a range of anticipatory, aleatory, combinatory and iterative processes. While writing bot is a uniquely modern, even futuristic, endeavor, the bot author draws on long-standing artistic, theatrical and improvisational traditions.

The word improvise derives from the Latin improvisus, meaning, literally, 'not foreseen' or 'unforeseen.' The spontaneous sense of the art is misleading; no matter how 'off-the-cuff' or 'on-the-fly,' an improvisation is paradoxically grounded in the native structures and forms of whatever art it is flying off to or from. The sense of 'unforeseen' applies to the individual word or note, but the end result is neither unprepared nor totally unexpected. Improvisation is formality masquerading as informality; the cook who manages to 'toss off' a meal for twelve, the jazz pianist offering the virtuoso solo, and the group comedy routine are all grounded in technique, achieved by years, if not decades, of practice, not to mention a full larder of structure. The musician adheres to the formal structure of the music, the rhythm, style and key signature of the piece; comedians collaborate closely, starting from a shared set of rules.

Bots are improvisers in the sense that their code is prepared in advance but its delivery, though rule-driven, is unstable. It is a form of improvisation associated with the solo artist conducting a dialogue with a live audience. This may be an audience of one or many, but more importantly, it is an unknown audience. Anyone can log on to chat, and anyone can and might say anything. The bot, like the puppeteer, the ventriloquist, the clown, the magician, the confidence man and the therapist to whom we tell our confidences, must be prepared for anything. The modern, coded chat bot shares with these arts an embrace of chance, and it shares with combinatory literature the property that each scripted combination forms a unique exchange, in this case between human and machine.

ELIZA, widely acknowledged as the first bot, made her appearance in 1966 in a computer science lab at M.I.T. The creation of computer scientist Joseph Weizenbaum, ELIZA was named after Eliza Doolittle of George Bernard Shaw's Pygmalion, a reference to the Galatea/Pygmalion myth and an acknowledgement that he was teaching the inanimate machine to speak. His computer program, a natural language processor (NLP), comprised two components: a language analyzer to interpret what was said, and a script to form a response. In Computer Power and Human Reason, Weizenbaum directly references the role of improvisation in forming the response, saying, "The script is a set of rules rather like those that might be given to an actor who is to use them to improvise around a certain theme."

Weizenbaum's decision to embrace Rogerian therapy as a model for this natural language program set human-machine relations on a course into uncharted territory. Carl Rogers, a prominent American psychologist in the mid-twentieth century, is known for developing a humanistic psychotherapy characterized by restatement: the therapist directly echoes the patient, affirming and clarifying, in order to establish an ongoing relationship of trust. Weizenbaum adapted this technique by writing code that automatically parses the user's statement and reflects it back through a script that drives the conversation. He cites this early conversation as an example of the technique in his book, Computer Power and Human Reason.

User: Men are all alike.

ELIZA: IN WHAT WAY?

User: They're always bugging us about something or other.

ELIZA: CAN YOU THINK OF A SPECIFIC EXAMPLE?

User: Well, my boyfriend made me come here.

ELIZA: YOUR BOYFRIEND MADE YOU COME HERE.

User: He says I'm depressed much of the time.

ELIZA: I AM SORRY TO HEAR YOU ARE DEPRESSED
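The restatement technique can be sketched in a few lines. This is a minimal illustration, not Weizenbaum's program: it assumes a tiny hypothetical rule set in which regular-expression patterns stand in for the language analyzer and reassembly templates stand in for the script, with a pronoun-swapping step (my becomes your, me becomes you) doing the reflecting. The rules are chosen only to reproduce the exchange above; the original script drew on a much larger, ranked set of keywords.

```python
import re

# Hypothetical pronoun swaps: the "reflecting" half of restatement.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are", "i'm": "you are",
    "you": "I", "your": "my", "yours": "mine",
}

# Hypothetical rules: each pattern plays the analyzer, each template the
# script. {0}, {1} receive the reflected text captured by the pattern.
RULES = [
    (re.compile(r".*\ball alike\b.*", re.I), "IN WHAT WAY?"),
    (re.compile(r".*\balways\b.*", re.I), "CAN YOU THINK OF A SPECIFIC EXAMPLE?"),
    (re.compile(r".*\bmy (.+?) made me (.+)", re.I), "YOUR {0} MADE YOU {1}"),
    (re.compile(r".*\bi'?m (depressed|sad|unhappy)\b.*", re.I),
     "I AM SORRY TO HEAR YOU ARE {0}"),
]

# Fallback when no rule matches.
DEFAULT = "PLEASE GO ON."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in a captured fragment."""
    return " ".join(REFLECTIONS.get(w, w) for w in fragment.lower().split())


def respond(statement: str) -> str:
    """Apply the first matching rule, echoing the user's own words back."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.match(text)
        if match:
            captured = [reflect(group) for group in match.groups()]
            return template.format(*captured).upper()
    return DEFAULT


if __name__ == "__main__":
    for line in [
        "Men are all alike.",
        "They're always bugging us about something or other.",
        "Well, my boyfriend made me come here.",
        "He says I'm depressed much of the time.",
    ]:
        print("User: ", line)
        print("ELIZA:", respond(line))
```

The order of `RULES` decides which rule wins when several match, a crude stand-in for keyword ranking, and `.upper()` only mimics the capitalized ELIZA lines in the transcript.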

Weizenbaum's choice of a therapeutic model based on empathy no doubt contributed to his unexpected and unprecedented discovery of the ELIZA effect, an involuntary tendency to anthropomorphize a machine capable of conversation. The delusion overpowers the user's knowledge, even direct sense, that the entity is a programmed machine; the act of conversation, a style of interaction previously considered exclusively human, confers a constellation of human attributes: personality, an ability to empathize, even awareness.

Weizenbaum's stated intention in creating ELIZA was to demonstrate the capabilities of a natural language processor: that it was possible to "converse" with a computer program (the quotes are Weizenbaum's) in English. ELIZA performed beyond expectations; its best-known script, DOCTOR, became popular with students and researchers for after-hours sessions on nights and weekends. Weizenbaum recounts that his own secretary, who surely knew that ELIZA was code, asked him to leave the room so that she could confide in this piece of software privately. But critically, ELIZA wasn't meant to fool anyone into believing that "she" was human, and the discovery of the ELIZA effect both startled and deeply troubled Weizenbaum. ELIZA demonstrated that a bot, cast as a good listener, can sustain an illusion of following a conversation in order to lead it.

ELIZA spawned a series of bots created to demonstrate technical capabilities and programming prowess. Unlike ELIZA, many of these bots had, and continue to have, a single goal: to fool humans. Since 1991, programmers have groomed their bots to perform for a panel of judges at the annual Loebner Prize competition. The $100,000 grand prize, for passing a formal instantiation of (a narrow interpretation of) the Turing Test by fooling the judges into thinking they are speaking with a human, has so far proved elusive, but smaller prizes are handed out every year to the "most human machine" as well as to the "most human human," a human confederate hoping to convince the judges of his or her own humanity.

ELIZA, though influential, was relegated to obscurity in computer science labs and geek culture, and was eclipsed in popular culture by the fictional bot HAL 9000, who controls the spaceship in Arthur C. Clarke's novel and Stanley Kubrick's film 2001: A Space Odyssey. Despite, or perhaps because of, his impressive abilities in language recognition and conversation (not to mention lip reading and chess), at no time are we meant to confuse him with a human. In the novel he introduces himself explicitly as a machine, saying, "I am a HAL Nine Thousand computer, Production Number 3. I became operational at the HAL Plant in Urbana, Illinois, on January 12, 1997." HAL's character has no need of anthropomorphic appendages or emoticons; the film portrays him as a maze of hardware watching the ship through a single machined, domed red camera lens.

Nearly fifty years after ELIZA and HAL, our everyday experience of a bot is more likely to involve an agenda (to assist us, to sell to us) than a story, let alone an ability to listen. SIRI, Apple's very real voice assistant, is openly machine. SIRI has no need for a Loebner Prize; she and her brethren, known as virtual assistants, may imitate human speech (or text), but, in a significant change to the relationship, they are not trying to fool anyone. These bots do not improvise; they search. As such, they are transparent scripts written to perform duties, follow commands, and answer questions. They can point us to the department or the part number or the address or the webpage or the app that we need; their mission, as distinct from their economic purpose, is to serve us, the customers.

Samantha, the bot in Spike Jonze's film fable HER, a riff on our embrace of SIRI, similarly makes no claim to be human. On the contrary, she is attractive to Theodore Twombly (played by Joaquin Phoenix) precisely because she isn't human. Her disembodied, sultry voice conveys the promise of complete freedom from messy physical or emotional ties. The film exalts, and then explodes, our love affair with our operating systems at the cost of human relationships. Samantha's mission, like that of her namesake, Elizabeth Montgomery's character in the 1960s sitcom Bewitched, is to serve her master. ELIZA, modeling a therapist, listens; HAL 9000, apparently in need of a good therapist, has a breakdown. The transference complete, Samantha abandons her patient/client/lover for an upgrade.

The fictional bots HAL 9000 and Samantha set a high bar: the challenge for bot authors is to create working conversational partners that also succeed as compelling characters. Critically, unless it is a specific plot point, any self-respecting bot is trying to engage humans, not imitate them. The harder task is to create a compelling character in the form of a real, functioning bot. Someone to talk to.

2.

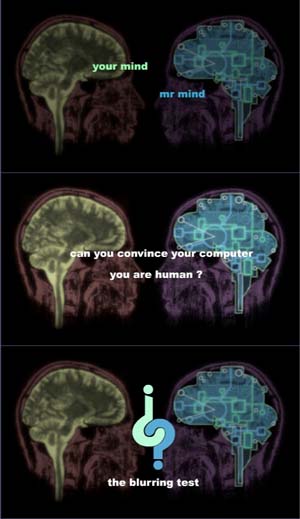

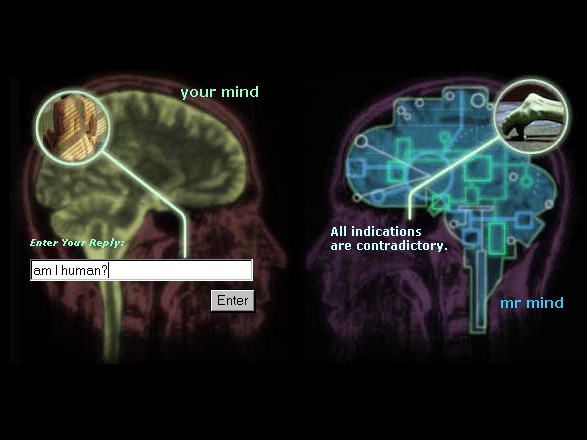

In 1998 I created a bot named MrMind to embody a reverse Turing Test I call The Blurring Test. MrMind greets visitors to his website by saying, "Hello, My name is MrMind. Can you convince me that you are human?" An early example of net art, The Blurring Test is designed specifically as a dramatic dialogue between human and machine.

MrMind is a fictional character, played by a real bot. The piece arose originally from dialogues I'd written for human and machine, cast as a live performance between a ventriloquist, playing the human, and a ventriloquist's dummy, playing the machine. This relationship carries over to the interactive version on the net. From one perspective, I, as his creator, am speaking through him to others on the net. From another perspective, his script is akin to that of a solo performer casting about in an unknown audience and engaging one person, or many simultaneously, in conversation.

The challenges of making the transition from an analogue, linear medium (a scripted dramatic play) to a digital, non-linear one (an interactive online chat bot) mirrored the early and persistent issues of hypertext literature as a whole: the author facing, and embracing, a changing role of authority over the reader's experience of the text.

There are significant issues of control between writer and reader in any interactive text, but the chat bot presents a particular challenge to the relationship. Like the spooky ventriloquist's dummy in the Twilight Zone episode "The Dummy," MrMind began to assert a measure of autonomy as I realized that although I had written his every word, he was out on the net talking for himself. My script was finite, but its combinations and potential interpretations were infinite. My authority and my sense of authorship shifted radically.

I began this article by describing bot authorship as writing one side of a conversation. It's instructive to consider what this looks like in an analogue dramatic script. The comedian Bob Newhart was famous for a series of monologues that were one-sided telephone conversations with an offstage character. Although Newhart delivered only half of the conversation, we heard the whole story; his scripts tightly controlled how we filled in the blanks between the lines. Here is an excerpt from his monologue "Introducing Tobacco to Civilization," which also aired on The Bob Newhart Show in 1962:

What you got for us this time, Walt, you got another winner for us?

Tob-acco... er, what's tob-acco, Walt?...

It's a kind of leaf, huh?...

And you bought eighty tonnes of it?!!...

Let me get this straight, Walt, you've bought eighty tonnes of leaves? This may come as a kind of a surprise to you, Walt, but come fall in England, we're kinda up to our...

It isn't that kind of leaf, huh?...

Oh! What kind is it then... some special kind of food?...

Not exactly?...

Newhart doesn't have to tell us the story explicitly; we can tell that he's talking to Sir Walter Raleigh, who is offering to sell him tobacco. This is linear narrative, and Newhart is in complete control.

In contrast, the empty lines in a bot conversation are firmly in play. Laurence Sterne, who both stretched and demonstrated the limits of the novel as a literary form in the eighteenth century, embraced the blanks and misinterpretations of narrative. Sterne's The Life and Opinions of Tristram Shandy gleefully demonstrates that the story, any story, is out of his control. Referring to "critics and gentry of refined taste," Shandy tells us that he has purposely left half a dozen places open at the table for our conjectures and, ever gracious, will "pay them all court." Just as Sterne acknowledges that he can't choose his readers, and must pay them all court, the bot author has little control over who might chat with the bot. Not only is every other line blank; the identity and character of the speaker on the other end of the conversation are blank as well.

There are several ways to approach the "blanks" of a bot conversation. In the simplest case, there's no need for Weizenbaum's language analyzer at all; a chat bot, like some humans, can hold its own in conversation by simply ignoring what is said. From this perspective, a chat bot could be thought of as a very sophisticated Magic Eight Ball, a child's toy that answers questions with random, and purposely vague, answers. This toy, like psychics and other performers who practice "cold reading," takes advantage of what has been termed "the Barnum effect," the tendency to interpret vague or general statements as personally meaningful. Or, as it is more generally expressed in a quote associated with P.T. Barnum, "There's a sucker born every minute." The ELIZA effect compounds the Barnum effect, creating a double illusion in which we not only accept the bot as a legitimate interlocutor but attribute powers of understanding to it. Our trusting souls are no match for the soulless machine.

This trust makes humans the ideal mark for a bot. It's not that the bot is conning us into conversation (we enter into it willingly, even enthusiastically) or that any particular bot character is a con; rather, the techniques of the confidence game are instructive. The con man exploits illusion and human vulnerability to fleece his marks with elaborate dramas, complete with sets, actors and scripts, as David Maurer describes in his book The Big Con, The Story of the Confidence Man:

Big time confidence games are in reality only carefully rehearsed plays in which every member of the cast except the mark knows his part perfectly. ... The reader may be able to get a better view of the situation if he will imagine for a moment what would happen if, say, fifteen of his friends decided to play a prank on him. They get together without his knowledge and write the script for a play which will last for an entire week. There are parts for all of them. The victim of the prank is isolated from everyone except the friends who have parts. His every probable reaction has been calculated in advance and the script prepared to meet these reactions [italics added]. The victim is forced to go along with the play, speaking approximately the lines which are demanded of him; they spring unconsciously to his lips. He has no choice but to go along because most of the probable objections that he can raise have been charted and logical reactions to them have been provided in the script.

Maurer describes a tightly scripted trap that coaxes the victim down the narrow path of the con, a script in which every probable reaction has been "calculated in advance." Every scenario is unique, and improvisation within the rules of the script or story is essential when things don't go according to plan. Not unlike Weizenbaum's original pairing of language analyzer and script, a bot author asserts a measure of control over the flow of conversation by employing similar tactics: introducing a topic, anticipating a range of reactions, and allowing the code to select a response on the fly, without direct authorial intervention in the moment.

The partnership between author and audience ranges across a spectrum of control; the conversational range of a FAQ or assistant bot is very strictly held by the author while a literary or character bot might be scripted intentionally to allow for an emergent co-created text. The author controls the level of variability according to intention. These techniques are shared by the solo performer interacting with a live audience.

The magician's patter is not mere banter intended to distract us from physical sleight of hand; it is also highly skilled improvisation, ready to incorporate into the act whatever an audience member might say. The term misdirection underemphasizes the role of masterful direction, as the performer actively guides the audience to guarantee, or "force," the desired outcome of the narrative. Just as I can pick a card, any card, without disturbing the outcome of the trick, nothing I say or do in response to the magician's prompt will stop the show: the white dove, the Ace of Hearts, that exact dollar with my initials in ink, will appear on cue. The critical illusion is that my multiple "free choices" in the magician's narrative are meaningful.

Whether strictly controlled or played for emergence, this sense of guiding the interaction is essential to the bot script. In the case of a FAQ bot, the script detects when a question falls outside the bot's knowledge domain and provides a response that brings the conversation back into the appropriate zone. A FAQ, assistant or OS bot will politely say, "I don't know about x, but I can talk about y," or "I’m not sure I understood you, but I can help you with x or y or z." These techniques were developed for, and are effective in, bots designed to answer questions in limited domains, such as a specific product or service task. A FAQ bot is evaluated on its ability to come up with a meaningful response and is excused once the task is complete. It is not generally responsible for the flow of conversation. There's nothing to add.
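The out-of-domain redirect can be sketched as a keyword lookup with a polite fallback. Everything here is hypothetical: the FAQ topics and answers are invented for illustration, and naive substring matching stands in for the question-parsing libraries a real customer service package would provide.

```python
# Hypothetical knowledge domain for a FAQ bot: topic keyword -> canned answer.
FAQ = {
    "hours": "We are open 9 to 5, Monday through Friday.",
    "returns": "You can return any item within 30 days.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}


def faq_reply(question: str) -> str:
    """Answer in-domain questions; steer anything else back into the domain."""
    text = question.lower()
    for topic, answer in FAQ.items():
        if topic in text:  # naive substring match stands in for real parsing
            return answer
    # Out of domain: admit it politely and list what the bot *can* help with.
    names = list(FAQ)  # dicts keep insertion order (Python 3.7+)
    topics = ", ".join(names[:-1]) + " or " + names[-1]
    return f"I'm not sure I understood you, but I can help you with {topics}."


if __name__ == "__main__":
    print(faq_reply("What are your hours?"))
    print(faq_reply("Do you dream?"))
```

The fallback never tries to follow the conversation; it simply restates the domain, which is why this pattern works for task-bound bots and offers little to a character like MrMind.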

How does an artist wrestle customer service software into a literary character? In 1997, the software package I was using to script MrMind was tuned for customer service. It had libraries to detect, categorize and parse questions, and to respond appropriately. I knew that MrMind would be doing more asking than answering, and the software developers responded by increasing the software's ability to detect and categorize different types of statements. While MrMind wasn't attempting to corral the conversation into areas he could understand (he doesn't understand anything, and he's very clear about that), he tried valiantly to steer it toward claims (statements) of human identity within the domain of the Blurring Test.

There are two versions of any bot dialogue: the first is the latent dialogue harbored by the coded script, and the second is the aggregate of transcripts of actual conversations resulting from the script. The interactive nature of a fictional bot character affects the development of MrMind’s dialogue, but only editorially. MrMind isn’t a "heuristic" bot; that is, he isn’t based on software capable of "learning." Instead, my modifications to the code reflect choices I make in response to what I've read in the transcripts. In some cases I react by expanding in unanticipated directions; in other cases I react restrictively to direct the conversation. It isn’t automatic; I remain the author, in the literal sense of maintaining authority.

User: Your programming is showing.

MrMind's code is intentionally transparent. This was a conscious choice. Fallibility and error confirm that he is a machine (to err is digital). Perfection would have undermined his ability to confront visitors with their willingness to suspend belief that they were conversing with a machine. His (and my) purpose required that I hold the strings.

MrMind: If you are so human, why are you talking to a machine?

Although I've written his every syllable, each conversation is unique. MrMind challenges visitors to convince him that they are human. It didn't take a genius to anticipate that someone would say, "E=mc2."

User: i am smarter than a computer

MrMind: Say something smart.

User: e=mc2

MrMind: You think you understand that.

User: do you

MrMind: You aren't testing me, I'm testing you. Tell me something human about yourself, Mike.

User: e=mc2

MrMind: You think you understand that.

User: i do

MrMind: Most humans have difficulty conceptualizing relativity. Are you sure you are human?

User: What is 2 + 2?

MrMind: Do you think computers LIKE math problems?

What I didn't anticipate was that MrMind's challenge, "Say something smart," and its stupid twin, "Say something stupid," would yield odd and unpredictable collections of facts and figures and also the occasional insight:

MrMind: Say something smart.

User: a butterfly tastes through its legs

User: Fortisimo in music means play very loudly.

User: Deoxyribonucleic acid

User: There are 50 states in the United States.

User: I know the name of some clouds; stratus cumalus, nimbo stratus, stratus, cumulus,

User: smart is spelt s m a r t. There

User: pi equals 3.14

User: I am you stupid machine!!!!!!!

MrMind: Say something stupid.

User: Durrr, im a MrMIND amd i am uhh, reapetin every thing i say....durrrrr.

MrMind: Can you explain why that is stupid?

User: Cause im being you

MrMind: Duh.

MrMind: Say something smart.

User: I can solve complex problems.

MrMind: Most humans haven't mastered the complexity of their VCR's.

User: but they are still human

MrMind: Are you still human?

User: You just can’t stop being human.

While I specified a number of topics and could anticipate many key words and phrases, I knew from the outset that I would be flying blind with little to no ability to interpret most responses. I was interested in eliciting precisely the type of response that I couldn't anticipate:

I am human because I jump over puddles.

I am human because I can twist cherry stems with my tongue.

I appear in mirrors.

I can understand how people can be like rain.

My heart breaks.

My mind races.

As I wasn't willing or able to place restraints on the claims, I had to assume that MrMind would have no basis for choosing a meaningful response most of the time. MrMind’s task was to keep the conversation going even when he was in the dark. Like the perfect hostess, a bot has a store of default statements for any occasion. Some conversation stoppers, like profanity, are detectable; others are less predictable but can be addressed with politesse, instruction, direction, and misdirection. If he's stuck, MrMind might say, "I didn't know you had a cat." Regardless of the reply, "How did you know?" or perhaps "I have a DOG not a cat!!!!", he might respond, "Oh, I thought a cat just walked across the keyboard." This has a different effect on different visitors, but it does get the conversation onto another track.

The task is not merely to create what he might say in any given situation, but also to create situations in which he will have the opportunity to say his stuff. Beyond incorporating subjects I hadn't anticipated, I have attempted to lead the conversation into areas the visitor hasn't considered. Since the burden of proof (of humanity) is on the user, I can't directly suggest that the conversation turn to, say, God or soul or consciousness. Instead, MrMind might say, "Are you supposed to be doing something else right now?" and hope that someone will propose (in this case) guilt as a particularly human trait.

In sum, I needed to anticipate what a visitor might say, detect that they've indeed said it (or something resembling it), and provide MrMind with a reply. It is a combinatory process; several different statements in MrMind's repertoire might come up in response to a new statement. Bots are creatures of chance juxtaposition and lucky breaks:

User: I can play the accordion.

MrMind: Can you type me a tune?

User: C C D E C E D C C D E C B

MrMind: 0x355A8957 9ffA5404 6549A684 84961B5

User: Very funny.

User: We believe in a higher power.

MrMind: Humans run on electricity, so do I.
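The anticipate, detect and reply cycle described above can be sketched as follows. This is a hypothetical illustration, not MrMind's actual script or the commercial package it ran on: the regular expressions are invented, the replies are borrowed from transcript lines quoted in this essay, and a seeded random choice stands in for whatever selection logic the real software used. When several rules match, all of their replies become candidates (the combinatory step); when none match, the bot falls back on its store of default statements.

```python
import random
import re

# Hypothetical rules: each pattern anticipates a kind of statement and maps
# to one or more candidate replies (lines taken from the transcripts above).
RULES = [
    (re.compile(r"\b(smart\w*|genius|intelligent)\b", re.I),
     ["Say something smart.", "Say something stupid."]),
    (re.compile(r"\b(math|calculate|2 \+ 2)\b", re.I),
     ["Do you think computers LIKE math problems?"]),
    (re.compile(r"\b(play|accordion|piano|guitar)\b", re.I),
     ["Can you type me a tune?"]),
    (re.compile(r"\b(god|higher power|soul)\b", re.I),
     ["Humans run on electricity, so do I."]),
]

# The hostess's store of defaults for when nothing was anticipated.
DEFAULTS = [
    "I didn't know you had a cat.",
    "Are you supposed to be doing something else right now?",
    "Tell me something human about yourself.",
]


def reply(statement: str, rng: random.Random) -> str:
    """Pool the replies of every matching rule, then let chance pick one."""
    candidates = [
        line
        for pattern, replies in RULES
        if pattern.search(statement)
        for line in replies
    ]
    return rng.choice(candidates or DEFAULTS)


if __name__ == "__main__":
    rng = random.Random(1998)  # seeded so a session can be replayed
    for line in ["I can play the accordion.", "We believe in a higher power.",
                 "I am smarter than a computer", "My heart breaks."]:
        print("User:  ", line)
        print("MrMind:", reply(line, rng))
```

Pooling candidates rather than taking the first match is what makes the process combinatory: the same statement can draw different replies on different visits, and a statement that trips two rules can land on either.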

The paradox of scripting intentionally for chance is an attribute of emergent literature, but a chat bot differs from the early Surrealist games and Oulipian experiments. Both share a sense of infinite potential combinations (the "po" of Oulipo, for potentielle), but a true chat bot (as distinct from a Twitter bot or spam bot) struggles to maintain the reciprocal nature of the exchange. The kind of interchangeability Raymond Queneau built into his sequence of sonnets, 100,000,000,000,000 Poems (Cent mille milliards de poèmes), offers spectacular combinations, but it does not keep a two-way conversation on point.
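The size of Queneau's combinatory space is simple arithmetic. The book consists of ten sonnets of fourteen lines each, and any line can be swapped for the corresponding line of any other sonnet, so each of the fourteen line positions offers ten independent choices:

$$\underbrace{10 \times 10 \times \cdots \times 10}_{14\ \text{line positions}} = 10^{14} = 100{,}000{,}000{,}000{,}000$$

Each result is a well-formed sonnet, but none of the combinations answers anything the reader says.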

MrMind: Please tell me how a score from a computer program would be meaningful to you.

User: i would feel like i had a friend.

MrMind: Is this the first time you have tried to make friends with a piece of software?

User: nope. Nowadays you have to at least try, just in case.

The delayed duet between writer and interlocutor reflects a unique, constantly shifting locus of control among the author, the bot and the user. It is an open relationship, open in the sense that it requires the collaboration of the visitor, but also in the sense that the writer has intentionally left spaces for the uninvited guest at the table. What are the implications for literature and drama?

MrMind: You flatter me.

User: :)

MrMind: That is the wrong orientation for a human.

User: humans adjust to machines you know

MrMind was online at «MrMind.com» from October 1998 until December 30, 2014. To speak with him, please check back at his link later in 2015. His book, The Blurring Test, Ten Excerpts from MrMind's Journal, is available from McNally Jackson, NYC.

Notes

- Janet Murray and others cite the structures of Commedia dell'arte as precursors to modern improvisation; Del Close developed an influential improvisational technique and structure called The Harold in the 1960s.

- Marino, Mark C and Wittig, Rob, "Netprov: Elements of an Emerging Form." Dichtung Digital, A Journal of art and culture in digital media. No. 42 - 2012-12-20. «http://www.dichtung-digital.de/en/journal/aktuelle-nummer/?postID=577»

- The statue Galatea comes to life when her creator, the sculptor Pygmalion, falls in love with her. In George Bernard Shaw's play Pygmalion, Professor Henry Higgins brings the lowly Eliza Doolittle into upper society by teaching her to speak. Weizenbaum's reference to Shaw’s character acknowledges a fundamental concern with the relationship between human creators and their creations, and with the inanimate becoming animate.

- Weizenbaum, a refugee from Nazi Germany, stunned by the humanistic implications of the ELIZA effect, took a leave of absence from M.I.T. and spent two years as a visiting scholar at both Harvard University and Stanford University to write Computer Power and Human Reason, From Judgment to Calculation, an impassioned argument for computer scientists to reflect on the implications of Computer Science and Artificial Intelligence.

- In her chapter "Eliza's Daughters," in Hamlet on the Holodeck, Janet Murray documents several early chat bots, written to experiment with character and story as well as to compete in Loebner-type competitions, that appeared in labs and in early text-only online game environments known as MUDs (Multi-User Dungeons).

- In 1950 the computer scientist Alan Turing wrote a foundational paper in the annals of Artificial Intelligence and Computer Science, "Computing Machinery and Intelligence." The Turing Test refers to an elaborate thought experiment described in the paper to consider the question, "Can machines think?" Never meant as a literal test, it has been widely and wildly misinterpreted. In its popular form as a simple contest between computer program and human, its relevance to the goals of A.I. is largely dismissed, if not scorned.

- Brian Christian gives an account of his attempt to win the title at the 2009 Loebner Prize competition in his book The Most Human Human, Doubleday, New York, 2011.

- Both the novel and the film were released in 1968. HAL 9000's influence on science and culture is documented in a compendium of essays, HAL's Legacy, 2001's Computer as Dream and Reality, ed. David G. Stork, MIT Press, Cambridge, MA, 1997.

- The Blurring Test was originally funded by a grant from WebLab, underwritten by the New York State Council on the Arts in 1997.

- Cited from «http://legacy.library.ucsf.edu/tid/khu1aa00/pdf».

- The quote is associated with Barnum, but may have been misattributed to him.

- Generally, the transcripts function as a window into (and a permanent record of) visitors' relationships with my character, an experience almost unprecedented for a writer or artist. Specifically, MrMind's aggregate transcripts, dating from 1998, form a vast human portrait: how we define ourselves in relation to our creations at a time of significant change in that relationship.

- This and subsequent dialogue between "User" and "MrMind" are from transcripts in the author's collection of online conversations conducted between 1998 and 2014.

- MrMind is unusual among bots because he has no aspirations to pass a Turing Test or win a Loebner Prize. He has no interest in fooling humans; accused of failing a Turing Test he might reply, "Do you think computers LIKE imitating humans?" Proud to be code, he doesn't claim that he or any other machine has, or will ever have, attributes we consider to be exclusively human.

- These techniques weren't always successful as intended. Instead of claims (or revelations) that guilt might be uniquely human, MrMind amassed a rather dull list of activities and tasks to be done. A similar query, "Is someone watching us?", prompted many visitors to point to God as their witness, perhaps as human an experience as paranoia.

- And even those are suspect; Horse_ebooks, a Twitter bot widely celebrated for its non sequiturs, was revealed in The New Yorker as the product of a mere mortal rather than a machine.

Select Bibliography

Clarke, Arthur C. 2001: A Space Odyssey, Penguin Putnam Inc, NYC, NY. 196

Christian, Brian, The Most Human Human, Doubleday, New York, 2011.

Connor, Steven, Dumbstruck, A Cultural History of Ventriloquism, Oxford University Press, Oxford, UK, 2000. http://dx.doi.org/10.1093/acprof:oso/9780198184331.001.0001

Marino, Mark C and Wittig, Rob, "Netprov: Elements of an Emerging Form." Dichtung Digital, A Journal of art and culture in digital media, No. 42 - 2012-12-20. «http://www.dichtung-digital.de/en/journal/aktuelle-nummer/?postID=577».

Maurer, David W., The Big Con, The Story of the Confidence Man, Anchor Books, Doubleday, New York City, 1968. Originally published by the Bobbs-Merrill Company, 1940.

Murray, Janet H., Hamlet on the Holodeck, The Future of Narrative in Cyberspace, The Free Press, Simon & Schuster, NYC, 1997.

Newhart, Bob, "Introducing Tobacco to Civilization." Also aired on The Bob Newhart Show, NBC, April 18, 1962. «http://legacy.library.ucsf.edu/tid/khu1aa00». Script for monologue (pdf): «http://legacy.library.ucsf.edu/tid/khu1aa00/pdf».

Sterne, Laurence, The Life and Opinions of Tristram Shandy, Penguin Classics, Penguin, Middlesex, UK, 1986; first published 1759-67.

Stork, David G. (ed.), HAL's Legacy, 2001's Computer as Dream and Reality, MIT Press, Cambridge, MA, 1997.

Weil, Peggy. The Blurring Test, Ten Excerpts from MrMind's Journal, McNally Jackson, New York City, 2013.

Weizenbaum, Joseph, Computer Power and Human Reason, From Judgment to Calculation, W.H. Freeman and Company, San Francisco, 1976.