Hyperrhiz 17

Future Fiction Storytelling Machines

Caitlin Fisher

York University

Citation: Fisher, Caitlin. “Future Fiction Storytelling Machines.” Hyperrhiz: New Media Cultures, no. 17, 2017. doi:10.20415/hyp/017.e01

For twenty years my research and creative practice have been in the area of electronic literatures, broadly conceived. Here I look back, both through my own work and formation, and through the trends I’ve observed in our field, both as a maker and as a theorist, in order to engage in some speculation as to what might be next. I hope that some of the future fictions whose contours I see, if imperfectly, and some of the works I’d like to show along the way might resonate with some of your own ideas and practices and possible futures.

I direct an art/science lab — I guess we now call it a STEAM or tinquiry lab — and my path to the field of electronic literature work is circuitous. In spite of — because of — my doctoral training in social and political thought. But I do have experience in thinking about the ways in which new technologies might allow us to tell stories differently — and how emerging literacies and expressive tools might affect the kinds of stories we want to engage and for how long and where.

Twenty years ago I worked in the area of hypermedia and my early research was deeply invested in linking structures and the epistemological challenge link-node constellations might pose to academic writing — and the possibilities they might open up. I loved the idea of a text with multiple points of entry and many pathways breaking the philosophical line, and knowledge domain visualizations giving us new ways to communicate argument as well as structure, both the politics and the poetry of sculpting with data.

From there it wasn’t far to imagining the possibilities of using digital tools to create new kinds of narratives outside of my more scholarly work. I began to experiment with hypertext fictions and poetry, trying to understand new grammars and possibilities — working at the interface to explore the relationship between tools and content. My creative and academic work veered toward digital poetics.

And down at Georgia Tech, computer vision scientists were working alongside theorists and writers and designers to create easy-to-use software for use with augmented reality, at that time an emerging technology. I fell in love with the idea of AR narratives, and organized a research program around augmented reality and storytelling guided by the premise that we live in a culture with the unprecedented capacity to bring together the physical and the virtual, to transform our relationships to objects and landscapes through the addition of computer-generated information, to create interactive narratives and spatialized storyworlds and that it was important to make and think alongside these objects to better understand what constitutes a successful, compelling and emotionally rich experience in such environments.

And then I got a room. For a long while the room was empty. Later it was filled with tracking devices. The room was a pretty terrifying puzzle: I was supposed to make stories in it. Stories people might want to engage while inside it.

And I felt little in my formal or informal training had equipped me for creating room-shaped narratives. I kicked myself that I hadn’t studied theatre or circus arts or soundwalks. I had to think about point of view, and what came after point-of-view editing, and plot (what plot? How?) and closure and ways to travel a story, or move a reader through it, all over again. It was terrifying and thrilling.

This paper is essentially about some discoveries I made resisting and writing narratives in and for that room and beyond it, and some predictions I’m going to make for future fictions based on my understanding of reading and writing and crafting narratives at the intersection of emerging technologies and interfaces. I tend to avoid being a futurist, and I definitely see that there are multiple paths, a proliferation of possible futures, and that my vision is necessarily partial. Nevertheless, here I go ;) I’m also going to give you just a bit of a show and tell along the way, focusing on works my students and I have created in the Augmented Reality Lab at York University, especially for those of you who don’t have ready touchstones for some of these kinds of pieces.

*

But let me begin my argument for the future by stepping back again for a selective, skeletal history. Unlike my experience with the room, when I first faced the computer screen as a graduate student and explored hypermedia, I felt like I had mad translatable skills. I seemed to have everything that I could have on the printed page — and more. Hypermedia practice resonated strongly with many early and experimental forms and dreams with which I was familiar and I read hypermedia against storyquilting and femmage and shoebox archives as well as more mainstreamed practices.

At the time I started working in hypermedia, Storyspace was taking off. It was a technology formed by people working deeply at the intersection of computer science and literature: basically dream software created by English PhDs who thought about stories and the way they were crafted in a way that appealed to my own sensibilities. Storyspace, as the name suggests, organized text (and, less elegantly, some rich media) spatially. It had sophisticated guardfields, sticky pathways, collaborative potential and an identical reading and writing environment.

It’s a well-rehearsed argument that what came next, namely Mosaic and HTML, probably set back electronic literature by 20 years. Only now are we at the point where we are thinking again about the kind of sophisticated tech-enabled narratives that were probably available to us in the mid-90s.

One of the things that happened, too, in that initial movement from page to screen, whether the spatial narrative of Storyspace or the large linking potential of HTML, was that stories got bigger. Potentially vast. They certainly didn’t always get better…maybe they never got better. But, in general, the digital canvas was understood as being fairly limitless, and that limitlessness as worth exploring. There were fewer thoughts about diminishing returns. (And I say this kindly as someone who once crafted a piece with 17,000 links.)

Theorists of electronic literatures at that time, probably some of you in this audience, talked about different ways of following links and how we might leave breadcrumbs for others, what it meant to engage a text with no last page or final frame, how to create narrative tension, how appreciating the structure of the work might signal the end of reading it, and, of course, the pleasure of re-reading, a practice certainly not unique to hypertext, but an easy act for the linking author to encourage or mandate, urging pathways to collide at clusters of nodes, willing the reader to see the passage so very differently, and perhaps be changed, the next time around.

And I suppose enough time has passed now that I can let you in on the inside joke from a conference hallway many years ago. Twenty of us in that hallway, a quick straw poll revealing that between us we had written about 25 hypertext works that year and had read two, raising the question of the pleasures of writing hypertext narratives as opposed to reading them, and what it means to engage with expressive authoring tools…but bracket that for a second.

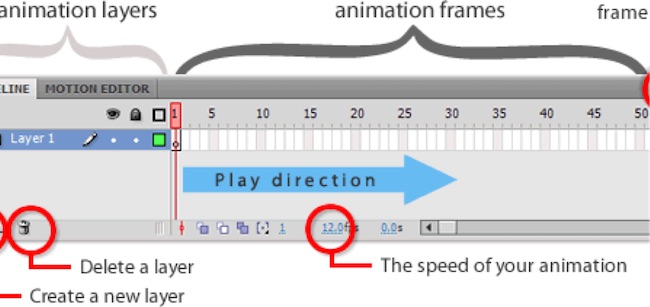

Flash forward again a few more years as textual writing gives way to full-fledged hypermedia — one of the things that encourages this is better bandwidth, of course: moving images are now possible. Not full-out video at this point, but certainly vector animation.

And so I’m teaching Flash to students and “the machine works on our thoughts” as the stories move from the spatialized canvas to the timeline, following the demands of the software. A different kind of story is created when you must set it on a line. Flash was also fairly tedious to craft, so it favored the one- or two-minute story, at least for beginners. These are the Vines of e-lit: the neverending books (the books without end, as Robert Coover identified them at the turn of the century) become almost by necessity so much shorter, favoring the poem or the puzzle game. Not the long form. Favoring the cinematic. I try to introduce Storyspace to the Flash students and everyone wants to hit play and make something happen! The elegance of the dual reading/writing environment is understood as a liability: all that coding for no moving image payoff. And voiceover replaces the written text.

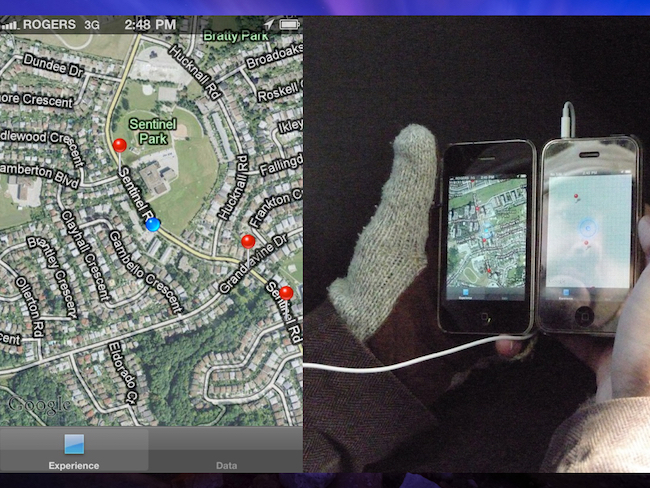

Forward a few more years: spatialized storytelling re-emerges in the form of stories located in the physical world in which the interface of the story is you moving through the world holding your GPS-enabled device. These are locative media stories, the mobile media narratives of the early and mid-2000s. For those of us in places like Ottawa or New York or Chicago or Stockholm our hands are freezing; night falls fast. We’re hoping, as supervisors of these projects, that there are only ten nodes and my face falls when I realize that I’ve agreed to be the external examiner for a mobile media narrative with thirty nodes across two football fields. Damn.

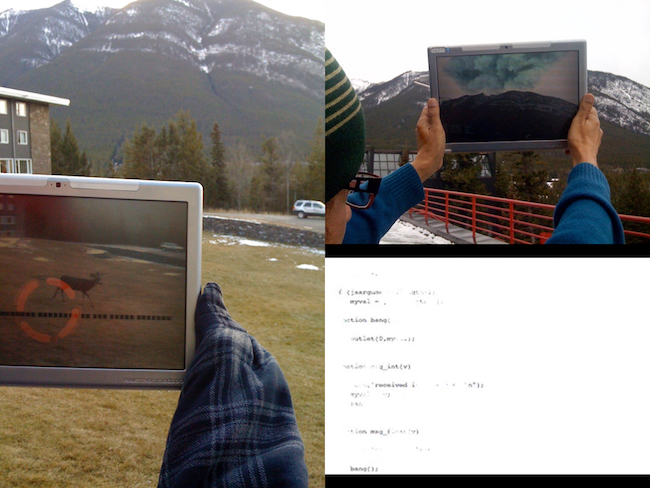

And the technology’s not quite there. You keep running back and forth across these hotspots in search of the narrative line. ANY narrative line. Also, sometimes, any media at all. And the distances are so great — the hotspots so necessarily large, we’re certainly not back to the beautiful looping structures of reading and re-reading we found on desktop screens, being a different person having passed these nodes before. And, later, for the early augmented reality mobile works, even daylight is the enemy.

There are a number of excellent experiments at this time, the early to mid 2000s. Mobile Bristol comes to mind, and a gorgeous vision for ubiquitous poetry out of Barcelona that promised to return guardfields so that you might walk the Ramblas knowing that different poems would be served up at different times, based on where you had walked before. But I never heard of a fully realized work. Tellingly, I can’t even remember the name of the software now.

*

Grant money begins to flow to research projects that combine mobile media and augmented reality — not so much to literature, though we siphon some off…mostly to digital humanities and STEM disciplines — a lot of the DH work involves historical sites and is less about new stories for new screens than mimetic recreation, in the tradition of 3D modeling from blueprints for virtual reality.

My own work in augmented reality emerges from the perspective that it’s way too much labor to create a 3D chair perfectly registered in the real world when I can just move an analog chair to that same spot. AR is already the far more theoretically interesting but uglier relation to virtual reality in terms of production values and I’m less about fooling the senses than trying to make the world more surprising. I think a lot about what it means to have haunted objects telling stories, and love how the analog and the digital work together in the AR storytelling machine.

Augmented reality is poised to become a one hundred billion dollar industry not because it makes us catch our breath the way some virtual reality environments do but, rather, because of the ways in which the real world matters in these works — to serving up context-specific advertising, absolutely, but also because, critically for us, the physical world is co-constitutive of the meaning of a work.

I think a LOT about what it means to have AR narratives set in libraries or in historically important neighborhoods that rely on a reader’s understanding of librariness or place or politics. The location can carry so much of the weight of the narrative, making AR fiction more like film or immersive theatre than the book. This is part of the new toolkit.

But for the most part, when we think about the ideal granularity and scale of these kinds of electronic fictions, it seems reasonable to turn our backs on vast narratives. The set-up favors the whispered secret, the one-paragraph text. No one wants to stand still in a spot for ten minutes. We can’t even stand a hundred delicious secrets because we can’t hold that iPad up in front of us for very long, even if we love the poem. Would you like to walk holding your iPad in front of you for an hour? The majority of these experiments are short, for all sorts of good reasons and we spend little time in them.

*

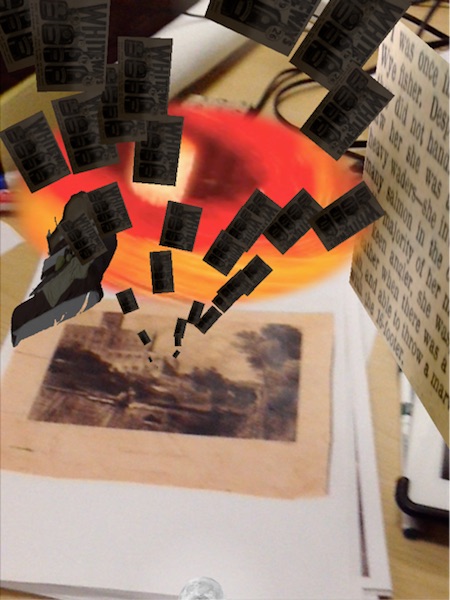

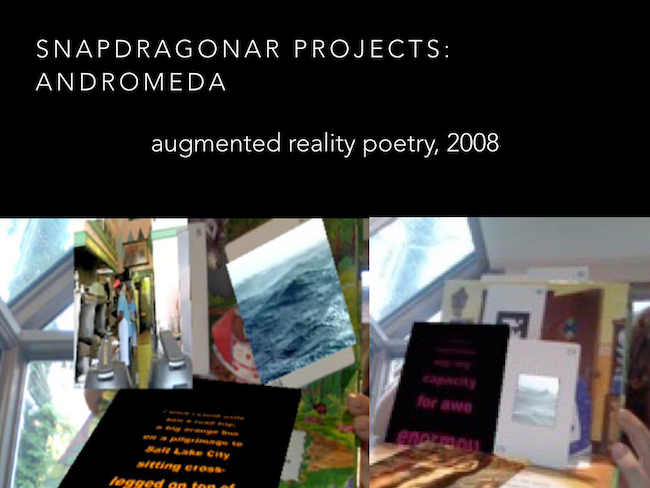

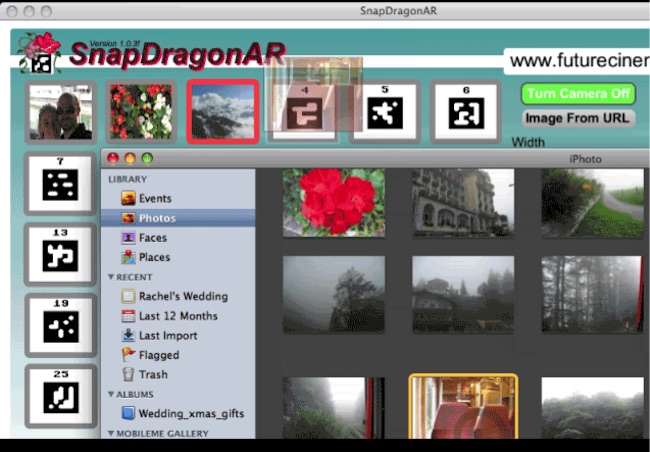

As my tools were still in their intermediary form, I still resisted writing for that room and resisted mobile media. I made small worlds (haunted cabinets, first-person confessionals, treasure boxes, book objects) as my practice evolved from fiducial, or marker-based, AR to natural feature detection. Andromeda (2008) was an augmented reality poem about stars, loss and women named Isobel that I made by overlaying a dollar store pop-up book with a small poem series, activated with a custom software solution called Snapdragon that we built in the lab.

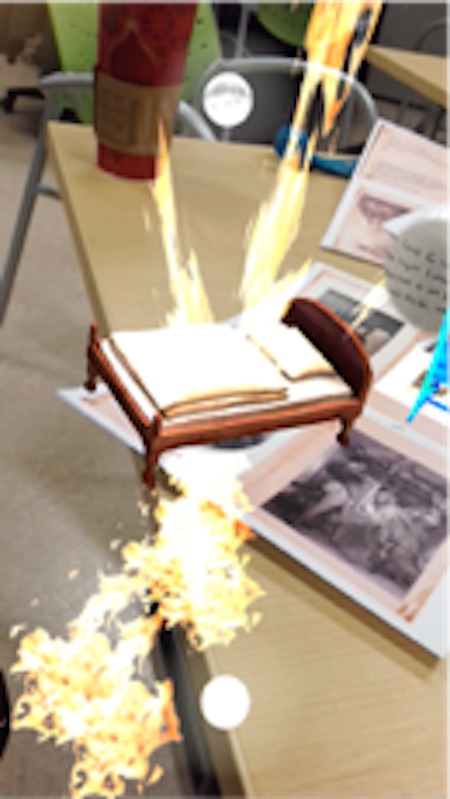

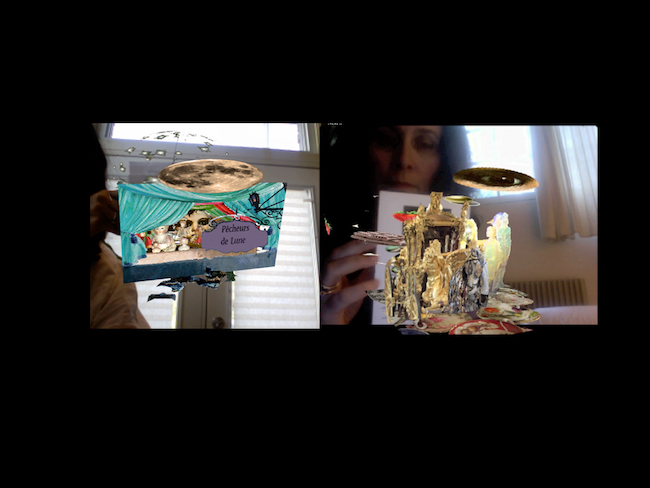

An early iteration of Circle, an augmented reality tabletop theatre piece that tells the story of four generations of women through a series of small, connected stories, used that same custom marker tracking system, and the user interacted with the piece by exploring the markers with a webcam, triggering small poetic voiceovers and videos. A later version was built in the Unity game engine and used natural feature tracking, the black and white markers of the earlier version now replaced by objects and photos. In the later version, the user interacts with the piece by holding up an iPad or smartphone as a magic looking glass to explore the story world.

My students and I also made web-based flash-AR in this period: not just the stories or the poems, but also story architectures so that people could re-use our code and make their own web-based AR for free and with no coding skills. The prototype for this experiment was a poem called Requiem — a multi-stanza poem in which each stanza is its own visual world…but the underlying code for each of these visually distinct scenes is identical.

Web-based work forced a relentless linearity on the pieces, but I also did experiment with a spatial arrangement by making AR theaters and treasure chests. I also collaborated on documentary work with historians to craft never-before-told stories of the Underground Railroad for schoolchildren.

And I collaborated with vision scientists to build drag-and-drop expressive tools so even my children could use augmented reality and help to establish new conventions for what was still a wide-open form.

Sure, we sent our recreation of the Labyrinth Pavilion from Expo 67 into the immersive tracking system — the Room — but our most popular variant of the project was a tabletop version accessed via iPad rather than the heavy, tethered optical see-through display. My lab tended toward the miniature even as recently as 2 or 3 years ago.

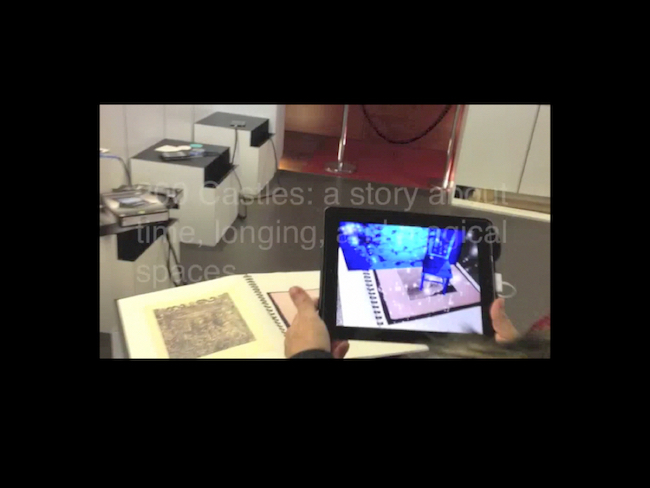

One of my most bookish works is an interactive AR piece called 200 Castles, a spatialized series of small stories set in both the domestic spaces of a castle and in the spaces of memory. The viewer unlocks the story by using the iPad as a magic looking glass to look at a series/book of old illustrations (a PDF with the required images). When the iPad’s camera “sees” the photo, the augmented reality technology overlays a series of small digital scenes suggesting the co-existence of multiple decades and triggering subtly interlocking stories of longing, archiving, sex, regret and ruins. (And a small mystery hidden in the old newspaper footage embedded in the project that no one engages.)

This was a deliberate homage to the print book both owing to its exhibition location — the Bibliothèque Nationale in Paris — and to the fact that what I felt was a far more interesting interface — a miniature chateau that allowed the reader to explore room and gardens and, by extension, story fragments, in any order using the touch function of the iPad or phone — was not intelligible to viewers: no one knew how to engage with that version of the piece.

*

So — small stories, handheld poetry. But here’s something else we know: audiences do exist for long-form digital narrative, even long-form interactive. People can spend thirty hours inside a game world, after all. Thirty hours binging Netflix.

In another area of the digital world, I’m struck by the number of fan fictions being written online in serial installments, mostly, if the comments can be believed, by young women. It’s not at all unheard of for a writer to post every Sunday night for two years. Think of a 17-year-old writing something for two years. Think of her subscribers.

And look at Tumblr roleplays in which 14-year-old girls finishing their O-levels are also mobilizing a cast of 12 for a branching narrative roleplay, casting it and producing what is essentially a multi-branching, collaborative novella on the fly in about 12 hours. For what it’s worth, some of those ephemeral threads I catch and am able to follow seem better along almost every dimension than a fully funded multi-year collaborative writing project with which I was associated only a decade before, the writing here energetic and multi-threaded and fast: and the pleasure of reading and writing and acting and branching and being electric together, sometimes just this side of synchronous, is obvious.

These examples contradict what I’d come to accept as trending about granularity and scale in digital narratives. Also duration and audience attention.

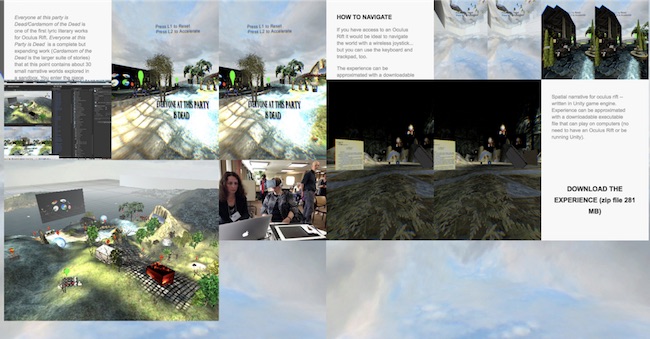

I started to work at a larger scale, enabled by the wide-open canvas of the Unity game engine, inspired by the personal virtual reality environment of the Oculus Rift…and emboldened by audience hunger for long-form narratives. One of my recent works is entitled Everyone at this party is Dead/Cardamom of the Dead and it’s one of the first literary works for the Rift. It is an expanding work that at this point contains about 30 small narrative worlds explored in a sandbox. You enter the piece standing at the edge of an island and in the middle of a soundscape of a party taking place, with guests being named: these were the guests of my 21st birthday and they are now all dead. What follows is a fictionalized narrative, at times semi-autobiographical, at other times entirely made-up. You are urged to explore houses and stones and artefacts spread across the terrain of the island at skewed scales, like a dreamscape. Addressable objects are signaled by tear-shaped signposting and will propel you into a different environment in order to access and bring to light three longer narratives of the dead woven through the work, via both the content and the linking structure: 1) a story of a sudden illness and euthanasia; 2) a coming-of-age story relating to a murder; and 3) a meta-theme of collecting as a consoling practice. In filmic terms the piece probably had a run-time of about four hours. In practice, people could engage with the work for about four minutes without becoming nauseous.

I still believe it to be mostly true that screen-based electronic literature is less likely to be engaged as book than gallery object, perhaps especially when we share these in public spaces. Or maybe it’s that the works themselves are their own trailers. Even the award-winning Pry, Samantha Gorman’s stunning work for iPad, held the attention of conference attendees at the Future of Storytelling conference in New York for about 60 seconds each (my very unscientific study as I ate a sandwich in the corner of the exhibition space). Of course a showcase like that doesn’t favor four-hour engagement. But surely it is teaching us, at some level, to treat works on screen as conceptual art, to scan for design and HCI; for code, rather than narrative.

And that’s certainly another fascinating way this is going. I tend to be a lyric outlier at many events where the story-making machine — the code and instructions for its use — is the art. Algorithmically-generated texts that signal that one of the futures of narrative is not narrative at all.

I tend not to do that kind of work, but my one sweet spot there relates to my work in visualization. I’m fascinated by the ways in which software originally created to maximize business value might also be a powerful tool for crafting computer-generated works in which I do see the potential for narrative…. Emerging practices like content analytics and sentiment analytics analyze vast archives, large quantities of unstructured data sources as well as non-textual and other non-traditional forms of datasets. This is the same kind of software that powered IBM’s Watson, the computer that defeated the human Jeopardy champions. It can also help us to uncover insights gleaned from our own lifelogging — our hundreds of thousands of emails, our ephemera, our health data, our Instagram, all of our archives: This will be one future of memoir. And with a bit of tweaking under the hood, or through combining lifelogs of more than one person, or deliberate contamination of the archive, it could also be a fiction-generator. It also recalls for me Mark Bernstein’s ideas about sculptural hypertext, characterized by the “removal of links rather than by adding links to an initially unlinked text,” with sculptural hypertext being the possible editing mode for works generated through data analytics.

And on that note of everything old being new again, I’m going to finish by telling you about returning to writing AR narratives for that room and thinking beyond it, and making a case for a future fiction storyworld. Here goes:

My vision for this one possible future of narrative is ambitious in scope, with games as a haunting: what I think comes next are spatialized AR fictional storyworlds to be explored over days or weeks, not hours, with a granularity and density of text, then, that has not yet been seen in in situ or mobile works. Future AR fictions will be vast like early hypertext, they will have guard fields like Storyspace, they will be persistent and ubiquitous, they will unfold over days, they will rely on contextual and real-time data, they will pull stories from the surroundings, they will be supported by hardware that doesn’t leave our arms sore or our hands cold and they will allow that one of the great pleasures of this kind of text is in making. The reading and writing environment at the level of interface will be similar, allowing for networked, real-time collaboration like online role play. It is potentially a living, growing narrative, with the promise of the unfinished gorgeousness of a book without end.

My research and experiments to make some of the first full-scale augmented reality spatialized long-form storyworlds accessed using next-generation AR glasses are part of a larger collaborative research project with Dr. Steve Mann, entitled “Augmented reality glass: sousveillance, wearable computing and new literary forms.” Steve Mann is widely understood to be “the father” of both augmented reality and wearable computing and a computer science pioneer who has developed the next generation of ARGlass head-mounted-display technology. Indeed, Mann’s Digital Eye Glass Laboratory (Glass Lab) is at the epicenter of innovation. He has been called a “modern day Leonardo Da Vinci.”

What difference might AR hardware make to literary creation in this medium? A big difference. Although even this version of AR glass — Meta Spaceglass — is an intermediate form…it’s easier to think past the HCI nightmare of AR via smartphones. Better field of vision will enable better registration, moving beyond a postage stamp image in the corner of your eyes. More importantly we can use our translatable skills: I missed what I first loved about hypertext and was sad to give up the dream of a persistent storyworld; a dreamlike, filmic palimpsest. And better hardware allows us to take advantage of other technological advances: the very small chunks of information and fleeting, easily intelligible experiences are so at odds with the incredible density of information that is now possible to build into these experiences.

The dream is that this hardware will be networked: we can share this storyworld and inhabit it in community, though we needn’t. The author, if indeed there is a single author, might even allow us to change it. We could maybe play within it, creating our own AU version of the story. Meta Spaceglass allows the wearer to sculpt and build while wearing the HMD. I can sculpt something in air and send it to you and you can send it back, changed. This is a huge technical feat, and a minor example, but think about that as a potential tool for storytelling.

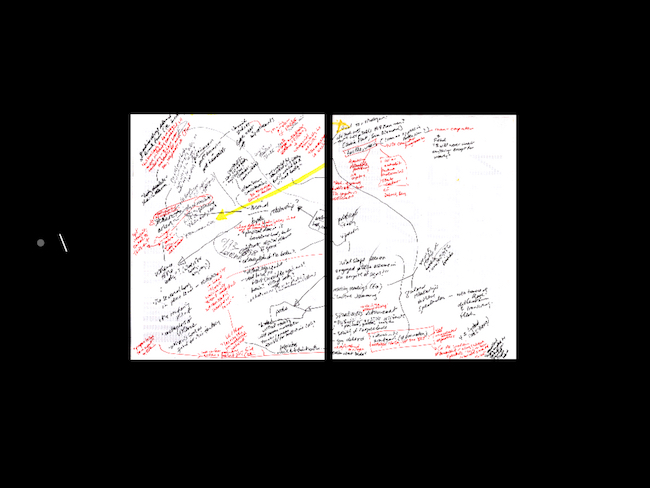

Leaving aside for a second the idea of networked and collaborative narratives, the first large-scale mobile AR narrative projects we’re imagining, dense literary dreamscapes to be revisited over many days, are made possible both by new technologies and new practices of experiencing stories through technologies. We will make something with the density of a novel alongside the rich linkages and possibilities for rereading promised by hypertext, combined with the potent poetics of the interplay between real and fictional worlds and the bodies walking through them. It will unfold through a series of 2000 interconnected “pages” or lexias or nodes, crossing dozens of city blocks and designed to be explored over time: ideally, and in contrast to currently available experiences, leisurely over many weeks rather than days. The story nodes are woven through and to each other in multiple ways and aligned with real world referents, telling a complex, shifting story of stalking, sex and loss, hoarding, a shrink machine responsive to the viewer’s touch and eccentric mornings that pull into evenings in an out-of-the-ordinary place: your neighborhood. It also plays with multiple POVs in a way that resonates with what ARGlass does so superbly: act as a mediating eye. The piece will initially be coded for Toronto but it is designed to be the kind of city-story that could be superimposed in many urban locations. The piece is a character-driven fictional palimpsest and the nature of reading a spatialized fiction means that a reader is unlikely to encounter the same story twice in the same way and that each engagement with the piece will be very different. This is in part because a reader can choose to visit/read/view the sections of the piece in any order and an author can weave through a variety of favorite, persuasive paths.
But there are also particular aspects of electronic literature in augmented reality that come into play: for example, through coding I may make it impossible for the reader to return to a character or decade, once it has been encountered. It is also possible to serve up new context based on time of day or the number of hours already spent with the piece. The challenge, of course, is to create a sustained storyworld with sufficient narrative strength and cohesion to take effective advantage of the enormous possibilities of this emerging genre and the novel possibilities posed by Mann’s breakthrough in AR hardware development.
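
For readers who want a concrete touchstone, the conditions just described (a node that closes once visited, a node that opens only at certain hours, a node that opens only after enough time spent with the piece) might be sketched as guard functions attached to story nodes. This is a minimal illustration under stated assumptions, not the project's actual software: every name here (`Node`, `ReaderState`, `once_only`, `only_between`, `after_hours_spent`, and the demo node names and texts) is hypothetical and invented for the example.

```python
from dataclasses import dataclass, field
from datetime import time
from typing import Callable, List, Optional, Set


@dataclass
class ReaderState:
    """What this hypothetical system remembers about one reader."""
    visited: Set[str] = field(default_factory=set)
    hours_spent: float = 0.0


# A guard answers one question: may this node be shown right now?
Guard = Callable[[ReaderState, time], bool]


@dataclass
class Node:
    name: str
    text: str
    guards: List[Guard] = field(default_factory=list)

    def is_open(self, state: ReaderState, now: time) -> bool:
        # A node is readable only if every one of its guards agrees.
        return all(guard(state, now) for guard in self.guards)


def once_only(name: str) -> Guard:
    """Close a node for good once the reader has encountered it."""
    return lambda state, now: name not in state.visited


def only_between(start: time, end: time) -> Guard:
    """Open a node during a time-of-day window (may wrap past midnight)."""
    def guard(state: ReaderState, now: time) -> bool:
        if start <= end:
            return start <= now < end
        return now >= start or now < end
    return guard


def after_hours_spent(hours: float) -> Guard:
    """Open a node only after the reader has spent enough time in the piece."""
    return lambda state, now: state.hours_spent >= hours


def available(nodes: List[Node], state: ReaderState, now: time) -> List[str]:
    """Names of the nodes this reader could read at this moment."""
    return [n.name for n in nodes if n.is_open(state, now)]


def read(node: Node, state: ReaderState, now: time) -> Optional[str]:
    """Return the node's text and record the visit, or None if it is closed."""
    if not node.is_open(state, now):
        return None
    state.visited.add(node.name)
    return node.text


def demo_nodes() -> List[Node]:
    # Invented placeholder nodes, purely for illustration.
    return [
        Node("character-a", "A first meeting.", [once_only("character-a")]),
        Node("evening-scene", "Night falls.", [only_between(time(20, 0), time(5, 0))]),
        Node("deep-secret", "A late reward.", [after_hours_spent(10)]),
    ]
```

The design choice worth noticing is that each guard is a small, composable function: an author could stack several on one node, which is one plausible way to approximate Storyspace-style guard fields in a spatialized work.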

The storyworlds we are now creating as part of the project will be among the first fictions written for and iteratively with Meta Spaceglass and, as such, will not simply be future fictions, but also texts to think alongside, objects to help us theorize both the hardware and software as expressive tools, the changing viewing situation, the evolution of this technology, the translatable skills and literacies needed to build and understand these texts and environments and texts that will allow us to consider what is at stake in the power of experiencing the world this way, and using ARGlass to see and be seen.

For Steve Mann the most interesting narrative is less the one you imagine than the one you inhabit. This kind of hardware also enables us to watch watchers, to visualize surveillance. He coined the term sousveillance to capture this relation of looking and has been working to document via photography some of what Meta Spaceglass will allow us to see, and he proposes a new kind of AR theater that exploits this new vision.

As for me, now, as always, I’m interested in using AR to make the world a little more magical and working alongside my students and associates to invent, design, build, and deploy next-generation AR technologies and to imagine compelling narrative practices in this medium. The industrial concept videos promoting AR suggest a pretty instrumentalized world. But you’re catching me in an optimistic moment, one which has me thinking that a compelling narrative overlay on the real world will find an audience and predicting a breakthrough moment for this no-longer-new technology, a future that I think aligns quite brilliantly with narrative: AR as both expressive and receptive future fiction storytelling machine that carries inside it some of the foundational dreams of electronic literature.

Notes

- Resonant with this observation, I find it striking that Scott Rettberg’s electronic literature visualization project suggests that even the field’s most influential elit texts have fewer than a hundred critical citations - most have about five. (https://vimeo.com/96769524)