Experiencing in Pieces: A Physical Computing Approach to Sonic Composition

Steven Smith

North Carolina State University

Jeremiah Roberts

North Carolina State University

Critical Essay: Sonically Composing Experiencing in Pieces

Introduction

In this critical essay, we first introduce the multimedia installation Experiencing in Pieces, followed by a brief overview of the conventions of sonic compositions proposed by Steph Ceraso and Kati Fargo Ahern. We then shift our attention to the working relationship between embodiment and virtuality in light of physical computing technologies, specifically the Microsoft Kinect v2, and the affordances these technologies bring to sonic compositions. Thus, this critical essay, along with the entirety of this kit, offers readers a means to recreate their own Experiencing in Pieces installation and highlights the potential that physical computing technologies hold for projects that utilize the body and spatiality to create meaningful experiences for audiences or individuals.

Experiencing Sound: Technical Implementation

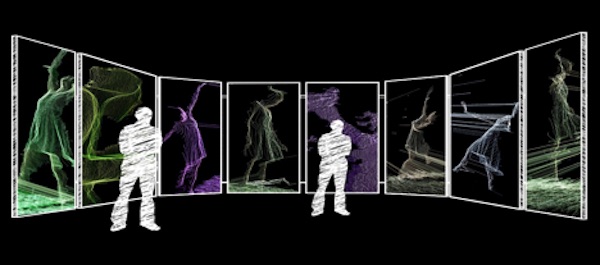

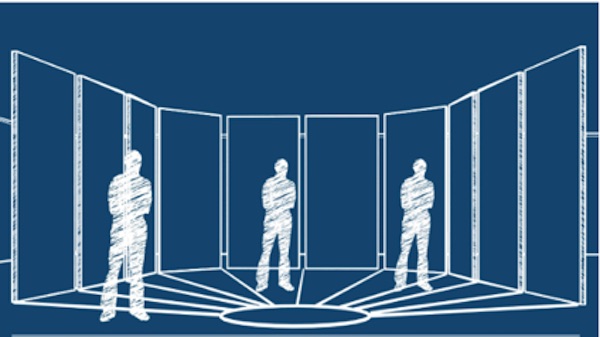

Experiencing in Pieces is an interactive, hybrid reality installation that allows the audience to deconstruct and reconstruct a piece of music composed by Jeremiah and Michael Roberts. De/reconstruction occurs when a participant’s body passes in front of a series of projectors and Microsoft Kinects in a mediated space. The idea behind this project is to provide the user an active role in the exhibit by allowing them to become part of the performance. One can control how the instruments and videos of those instruments are played, the volume of the instrument, and the opacity of the video according to their physical location in relation to the Microsoft Kinect; that is, the closer participants are to the Kinect, the louder the music plays and the more vibrantly the video of the dancer appears via the projectors. Experiencing in Pieces should be viewed both as a study of the human body in motion and as a means to explore the oscillation between sound, embodiment, and physical computing. As such, it affords participants unique opportunities for phenomenological experiences via the Kinect and TouchDesigner software.
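The proximity relationship described above (closer to the Kinect means louder audio and a more opaque video) can be sketched in a few lines of Python, the scripting language TouchDesigner also uses. This is only an illustrative sketch, not the installation's actual TouchDesigner network: the function name `proximity_level`, the linear ramp, and the `near_m` and `far_m` distances are all assumptions chosen for the example.

```python
def proximity_level(distance_m, near_m=1.0, far_m=4.0):
    """Map a participant's distance from the sensor to a level in [0, 1].

    Hypothetical helper: near_m and far_m are assumed distances in meters,
    not values taken from the installation. The same level could drive both
    the audio volume and the video opacity of one panel.
    """
    if distance_m is None:
        # No tracked body: keep the panel silent and the video transparent.
        return 0.0
    # Linear ramp: 1.0 at or inside near_m, 0.0 at or beyond far_m.
    t = (far_m - distance_m) / (far_m - near_m)
    return max(0.0, min(1.0, t))


if __name__ == "__main__":
    for d in (None, 4.0, 2.5, 1.0):
        print(d, proximity_level(d))
```

A non-linear curve (for example, squaring the result) might feel more natural for loudness; the linear ramp is used here only because it is the simplest mapping consistent with the description.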

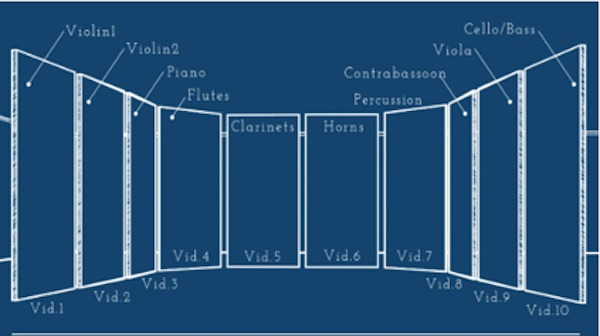

To begin, Experiencing in Pieces used ten projectors on a 270-degree wall. A final musical score comprising ten digital tracks was then recorded and separated within TouchDesigner so that each track was mapped to the location of one projector. Each instrument recording (flute 1, flute 2, a piano, another flute, a clarinet, percussion, horns, contrabassoon, viola, and cello) was then paired with a video of a specially choreographed dance for that instrument. The room itself, which was furnished with a Dolby Digital 5.0 sound system, was arranged the same way a live orchestra would have been set up on stage: clarinets and percussion in the middle, violas on stage right, cellos on stage left. This was done to emulate a real orchestra setting; for example, when positioned on stage left one would mainly hear cellos.

The music itself plays within the TouchDesigner software so that it never stops, and two Microsoft Kinects are placed at the back of the room facing the “performance viewing area,” which allows the Kinects to pick up and track the body when it enters the area. When no participants are present, the audio remains at “0” level volume and the video pieces on the projectors are simply replaced with audio spectrums to represent the area where sound and video can be present, so long as they have a human trigger. When a participant steps into the presence of the Kinect, the volume of the instrument and the opacity of the video rise until the audio can be heard and the video seen. In order to receive the full experience, ten bodies must be present, one in front of each panel, though it is possible for one to stand between two panels and experience the audio/video of each at half the level it would reach if one stood completely in front of a single panel. The installation itself allows different numbers of people to create different sounds with different instruments by standing in various locations; for example, three people can stand between the two violins and a horn to have one experience, then move to the piano, flutes, and contrabassoon to have a completely different experience. Quite literally, Experiencing in Pieces allows members of the audience to compose the musical score as they see fit and engage with it in a multitude of ways.
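The panel behavior described above (silence when nobody is present, a full level directly in front of a panel, and half levels between two panels) can be illustrated with a short Python sketch. It is a hypothetical model rather than the installation's TouchDesigner patch: the name `panel_levels`, the idea of expressing each participant's position in "panel units" (0 for the center of the first panel, 9 for the center of the tenth), and the triangular falloff are assumptions made for the example.

```python
PANEL_COUNT = 10  # ten projectors, one instrument track per panel


def panel_levels(positions, panel_count=PANEL_COUNT):
    """Return one level in [0, 1] per panel for the tracked participants.

    positions: lateral positions in panel units (assumed convention):
    2.0 is dead center on the third panel, 2.5 is halfway between the
    third and fourth panels.
    """
    levels = [0.0] * panel_count  # nobody present: every track stays at 0
    for x in positions:
        for i in range(panel_count):
            # Triangular falloff: 1.0 at the panel center, 0.0 one panel away.
            weight = max(0.0, 1.0 - abs(x - i))
            # Several bodies near one panel do not stack; keep the strongest.
            levels[i] = max(levels[i], weight)
    return levels


if __name__ == "__main__":
    print(panel_levels([]))          # empty room
    print(panel_levels([2.5]))       # one person between two panels
    print(panel_levels([0.0, 9.0]))  # two people at opposite ends
```

Taking the maximum rather than the sum for each panel is a design choice in this sketch: it keeps a crowded panel from exceeding full volume, and ten participants, one per panel, produce the complete score.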

Experiencing Sonic Compositions

In her article “(Re)Educating the Senses: Multimodal Listening, Bodily Learning, and the Composition of Sonic Experiences,” Steph Ceraso attempts to “reimagine the ways that we teach listening to account for the multiple sensory modes through which sound is experienced in and with the body” (Ceraso, 2014, 103). Through the idea of multimodal listening, or “how we think about and practice listening as a situated, full-bodied act,” students can explore how “sound works as a mode of composition to create particular effects and affects” on themselves or on an audience (103). Throughout the piece, she suggests many ways that multimodal listening involves not just the ears but the entirety of the body and how, in turn, listening may heighten one’s sensorial experiences (106). As a result of practicing the act of full-bodied listening, that which we are hearing (what she refers to as sonic experiences) can lead us to meaningful sensory encounters (109); what might be mundane can be turned into an esthetic experience that leads to change within ourselves or a listener. Learning to become a multimodal listener for the composition classroom can help students “become more thoughtful and sensitive consumers and composers of sound in both digital and nondigital environments,” and consider their own bodies and senses when composing (113).

The ideal assignment for instructors practicing multimodal listening in the classroom asks students to “design sonic compositions that would allow audiences with different preferences or bodily capacities to interact with their work,” and perhaps include “visual and textual options that could be turned on or off by the user” (116). In sum, multimodal listening assignments bring into account the entirety of the body when creating a sonic composition. As a result, students practicing multimodal listening will gain a deeper understanding of sound “as an integral part of the texts, products, and environments that they interact with every day” (119).

In a 2015 article by Steph Ceraso and Kati Fargo Ahern titled “Composing with Sound,” the two authors put theory into practice by offering ideas for two projects that show the possibilities of sonic composition. “The Sonic Object,” proposed by Ceraso, “focuses on an object that uses sound to enhance a user’s overall experience” (13). In this assignment, “students design their own sonic objects by sketching a model and talking through how it would work, as well as creating the distinct sounds for their object in an audio editor” (13). In a second project idea, titled “Embodied Soundscape Design” by Fargo Ahern, participants create a soundscape within a classroom by positioning themselves in various locations and using various sounds to create an affective experience via multimodal composition. In each example, the assignments have various goals, such as the enrichment of one’s understanding of the impact sound has on various contexts or the exploration of embodied interaction’s relationship to multimodal experience (15). Each project places an emphasis on the act of multimodal listening within the sonic composition classroom, an act which includes not only the ears but the entire body. Multimodal listening should be explored through sonic compositions to raise awareness of students’ own bodies during the composition process, they argue. The project ideas from “Composing with Sound” seek to accomplish an examination of ourselves, whether physically, mentally, or both, and our surroundings, while allowing us to engage with that which we experience on a macro level nearly every day: sound.

Ceraso and Fargo Ahern’s desire for multimodal listening to include the body and its ability to interact with a project to create an affective experience is one to which this project, “Sonically Composing Experiencing in Pieces,” finds itself deeply indebted. Experiencing in Pieces displays the possibilities for multimodal listening that Ceraso and Fargo Ahern envisioned by allowing audiences to interact with a sonic composition by positioning themselves in relation to a Microsoft Kinect that, in tandem with TouchDesigner, allows their body to act as a trigger to play a musical score. In turn, the body-as-trigger within this musical/sonic composition leads to the phenomenological experiences that are so prevalent within sound studies by giving the user a role in the outcome of what is played.

Sonic Transduction

In her work “Body,” Bernadette Wegenstein builds off scholars such as Maurice Merleau-Ponty (1945) and Katherine Hayles (1999) to justify the body as a medium, as well as to differentiate between body and embodiment. Citing Hayles’ How We Became Posthuman, Wegenstein primarily relies on the idea that “In contrast to the body, embodiment is contextual, enmeshed within specifics of place, time, physiology, and culture, which together compose enactment” (196). For her, embodiment refers to “how particular subjects live and experience being a body dynamically, in specific, concrete ways,” and as a medium the body acts as a communicative device for such individual ideals as gender, age, class, and so on—the phenomenological experience of human beings, if you will (Wegenstein, 2010, 20). The body, then, is the structure of an organism or, perhaps more simply, the vehicle, or medium, through which experiences can occur.

The need to consider embodiment today is, perhaps, more relevant than ever, given the pervasiveness of digitally mediated technologies and our connection to the digital realm. No longer are our experiences simply lived through the material world. Instead, “virtual environments expand our range of possible experience” (28). Virtual environments allow us to become “other” and create meaningful experiences via such networked environments as chatrooms, online games, and social media, which in turn have real consequences for our material selves. For example, in his 2018 work “Where is the Body in Digital Rhetoric?” Brett Lunceford calls into question the role that embodiment plays in online environments and seeks to explore the relationship between physical and virtual embodiment and the phenomenological experience created at the fold of the two. Lunceford notes that physical bodies are oftentimes the bodies that are punished for the “actions of the body in a digital world” (146); for example, in instances of “revenge porn,” a perpetrator, oftentimes male, will post nude photos of an ex-lover online for revenge, yet the victim is shamed rather than the perpetrator, which has consequences for the victim’s material self. The point, however, is not to paint the coalescence of materiality and virtuality in a negative light, but rather to show the very real implications the two can have for one another, and the affordances that this awareness can bring to the creation of a sonic composition.

Sonic compositions raise the question of how we might create a strong phenomenological experience, and they typically bridge the virtual and material with sound via digital technologies (audio recording devices, audio editing devices, etc.). Ceraso, for example, believes that in order to compose a sonic experience, we should attempt to utilize the body in such a way that it interacts with the installation or assignment itself, again highlighting the meld between materiality and virtuality given that these compositions rely on digital technologies. The means by which we can extract sound, and thereby point to the ways in which it creates an affective, embodied experience, vary, though typically digital software is used to create and manipulate an audio track.

One such way researchers could collect and create a sonic composition that we believe has been overlooked is through the inclusion of physical computing technologies, as they afford users a means to blend materiality and virtuality. As the “practice of combining hardware design with computer programming to create networked, interactive devices and environments,” physical computing devices (for example, Arduinos, cellphones, and Fitbits) are characterized by their ability to respond to the physical world in some way, using a combination of hardware sensors and software (Belojevic and Macpherson, 2018, 258). Such devices offer a means for examining the interaction between bodies, technology, and the environment, and we believe they work especially well for sonic compositions and allow for the possibility to create affective, embodied experiences with a sense that most engage with each day.

Take, for example, the Microsoft Kinect v2, the physical computing technology used by Jeremiah in his project Experiencing in Pieces. The Kinect itself acts as a physical computing device through its capabilities of skeletal tracking and feeding, or transducing, embodied data into computing projects. Defined by O’Sullivan and Igoe (2004), transduction is a “conversion of one energy form into another,” and is evident in the Kinect in such instances as the “mediation of the user’s body into a three-dimensional ‘skeleton’ created with the combination of the Kinect’s infrared projector and infrared sensor” (xix; Halm, forthcoming 2019). Incorporating the multimedia software TouchDesigner, Experiencing in Pieces affords participants of the installation an opportunity to transduce their bodies into an algorithmic trigger within the software to create the embodied, sonic experience envisioned by Steph Ceraso and others in the sound studies field. Ultimately, the body-transduced leads to a phenomenological experience with the sonic composition itself by allowing users to compose the musical composition in their own unique way depending on their physical location in reference to the Kinect’s sensors. Physical computing technologies afford us a means to transduce our bodies in such a way that we become part of the sonic composition. This goes beyond the digital-mixing software typically used by sound scholars to include full-bodied practices.

Further Considerations and the Future of Experiencing in Pieces

Experiencing in Pieces and future projects inspired by this kit epitomize what Ceraso is calling for in her article “(Re)Educating the Senses” by putting the body at the forefront of a sonic composition. This installation shines most given its ability to include differently-abled bodies. For example, as long as one is capable of being in the presence of the Kinect, the installation will work. The inclusion of both an audio and a video track appeals to those who may lack one sense or the other. Ultimately, though, Experiencing in Pieces showcases the ways that future scholars interested in sound studies can utilize physical computing technologies to engage audiences in sonic compositions while appealing to a variety of audiences.

The future of Experiencing in Pieces lies with its creator and co-writer of this article, Jeremiah Roberts. Ultimately his hope is to re-create the piece in a setting that is larger than the initial 10-panel display and to make it as large an installation as possible: more projectors, more panels, more Kinects, more musical pieces, and so on. But while Jeremiah’s piece requires an art installation space and likely exceeds the budget of the typical classroom assignment, our miniature version presented in this kit is much more realistic, relying only on the hardware (e.g., a laptop capable of running TouchDesigner and the Kinect v2) and a classroom-like space large enough to allow participants to stand in front of the Kinect (approximately 3-4 feet). We envision this smaller project being used in such a way that students come together in groups of four to create their own installation inspired by multimodal listening scholarship; groups of four would cut down on the cost of a Microsoft Kinect v2 (~$20.00 per student) and allow students to think through elements of space and how, for example, different sections of a classroom may trigger different sounds when passed through (though it should be mentioned this would require further knowledge of TouchDesigner’s interface). Other physical computing technologies, such as an Arduino and the Orbbec Astra, can certainly be used with TouchDesigner, and we encourage instructors and students to branch out and find creative ways to explore sonic compositions using said technologies.

Works Cited

Belojevic, Nina, and Shaun Macpherson. 2018. “Physical Computing, Embodied Practice.” In The Routledge Companion to Media Studies and Digital Humanities, edited by Jentery Sayers. New York: Routledge.

Ceraso, Steph. 2014. “(Re)Educating the Senses: Multimodal Listening, Bodily Learning, and the Composition of Sonic Experiences.” College English 77 (2): 102–23.

Ceraso, Steph, and Kati Fargo Ahern. 2015. “Composing with Sound.” Composition Studies 43 (2): 13–18.

Halm, Matthew. Forthcoming 2019. “Transducing Posthuman Bodily Inscription Prices.” In Trace Journal 4. N.pag.

Hayles, N. Katherine. 1999. How We Became Posthuman. Chicago: University of Chicago Press.

Lunceford, Brett. 2018. “Where Is the Body in Digital Rhetoric?” In Theorizing Digital Rhetoric, edited by Aaron Hess and Amber Davisson. New York: Routledge.

Merleau-Ponty, Maurice. 1962. The Phenomenology of Perception. Translated by Colin Smith. New York: Routledge & Kegan Paul.

O’Sullivan, Dan, and Tom Igoe. 2004. Physical Computing: Sensing and Controlling the Physical World with Computers. Boston: Cengage Learning, Inc.

Wegenstein, Bernadette. 2010. “Body.” In Critical Terms for Media Studies, edited by W.J.T. Mitchell and Mark B.N. Hansen, 19–34. Chicago: University of Chicago Press.